電腦視覺與虛擬實境組

COMPUTER VISION & VR GROUP

TARGETS

Vision Group

INTELLIGENT TRANSPORTATION SYSTEM

ASSISTIVE ALARMING SYSTEM

PEDESTRIAN & VEHICLE DETECTION

VR Group

ACTION RECOGNITION

HUMAN-COMPUTER INTERACTION

AUGMENTED REALITY

RESEARCH

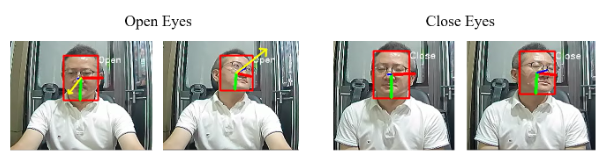

基於多任務之人臉資訊偵測深度學習模型

A Deep Multi-task Model for Face Information Detection

本論文提出了第一個以全臉影像作為輸入同時進行頭部姿態估計、眼睛狀態偵測和視線狀態估計的深度學習模型。我們使用多任務學習的方式,以單一模型進行推論,進而避免使用多個模型進行預測時需要大量記憶體的情況。為了能讓棋型在每個任務分支中能夠分辨出自身需要的特徵,我們設計跨維度注意力模組來為每個分支增強並篩選出對於各自任務所需要的特徵。此外,為了解決開閉眼狀態資料集沒有包含多角度頭部姿態的問題,我們提出生成具有眼睛狀態標註資料的資料擴增技術,提升模型在不同角度頭部姿態開閉眼偵測的穩定性。

In this paper, we present a lightweight multi-task deep learning model that utilizes full-face images to detect head pose, gaze direction, and eye state simultaneously. We propose a task-based cross-dimensional attention module that selectively filters and enhances relevant features for each specific task. Additionally, to tackle the lack of diverse head pose angles in the eye state dataset, we introduce a data augmentation technique to generate eye state data under different head poses, enhancing the model's robustness in detecting eye states under varying head poses.

基於深度學習之強健性即時道路標記偵測系統

Deep Learning-based Robust Real-time Road Marking Detection System

本論文提出了一種包含兩個階段的深度學習系統來解決現有偵測架構中難以辨識高度扭曲的路面標記以及難以平衡召回率以及準確率的路面標記偵測架構,本系統可在各種環境下即時準確的偵測地面標記。本論文還建立了一個新的路面標記偵測與分類的基準,收集的資料庫包含11800張高解析度影像。這些影像是在不同的時間和天氣狀況下拍攝於臺北的道路,並且手工標記出13種列別的候選框。實驗證明本論文提出的架構在路面標記偵測的任務中超過其他物件偵測架構。

In recent years, Autonomous Driving Systems (ADS) become more and more popular and reliable. Road markings are important for drivers and advanced driver assistance systems better understanding the road environment. While the detection of road markings may suffer a lot from various illumination, weather conditions and angles of view, most traditional road marking detection methods use fixed threshold to detect road markings, which is not robust enough to handle various situations in the real world. To deal with this problem, some deep learning-based real-time detection frameworks such as Single Shot Detector (SSD) and You Only Look Once (YOLO) are suitable for this task. However, these deep learning-based methods are data-driven while there are no public road marking datasets. Besides, these detection frameworks usually struggle with distorted road markings and balancing between the precision and recall. We propose a two-stage YOLOv2-based network to tackle distorted road marking detection as well as to balance precision and recall. Our network is able to run at 58 FPS in a single GTX 1070 under diverse circumstances. In addition, we propose a dataset for the public use of road marking detection tasks. The dataset consists of 11800 high resolution images captured at distinct time under different weather conditions. The images are manually labeled into 13 classes with bounding boxes and corresponding classes. We empirically demonstrate both mAP and detection speed of our system over several baseline models.

多感測器前端融合之深度學習應用於自駕車

A research on early fusion based on multi-sensor

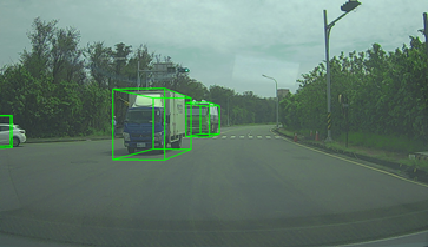

將多感測器間的資訊透過透過深度學習各自進行特徵擷取,各感測器擷取的特徵投影製相同的座標系,將這些特徵在特定通道上串接在一起得到融合後的特徵 ,進行前端的融合後,各感測器間可以利用彼此的優點截長補短,以得到良好的特徵。我們發展出一連串以電腦視覺(computer vision)為基礎的技術,其技術應用於障礙物偵測(行人或車輛偵測)。

The information between multiple sensors is collected through deep learning for individual feature collection. The features collected by each sensor are projected to the same standard system, and these features are connected to a specific channel to obtain the fused feature. After the early fusion, each sensor can take advantage of each other to cut short complements to obtain good features. We have developed a series of techniques based on computer vision techniques are applied on obstacle detection (such as pedestrian or vehicle detection).

個人化的動作辨識

User-Specific Motion Recognition

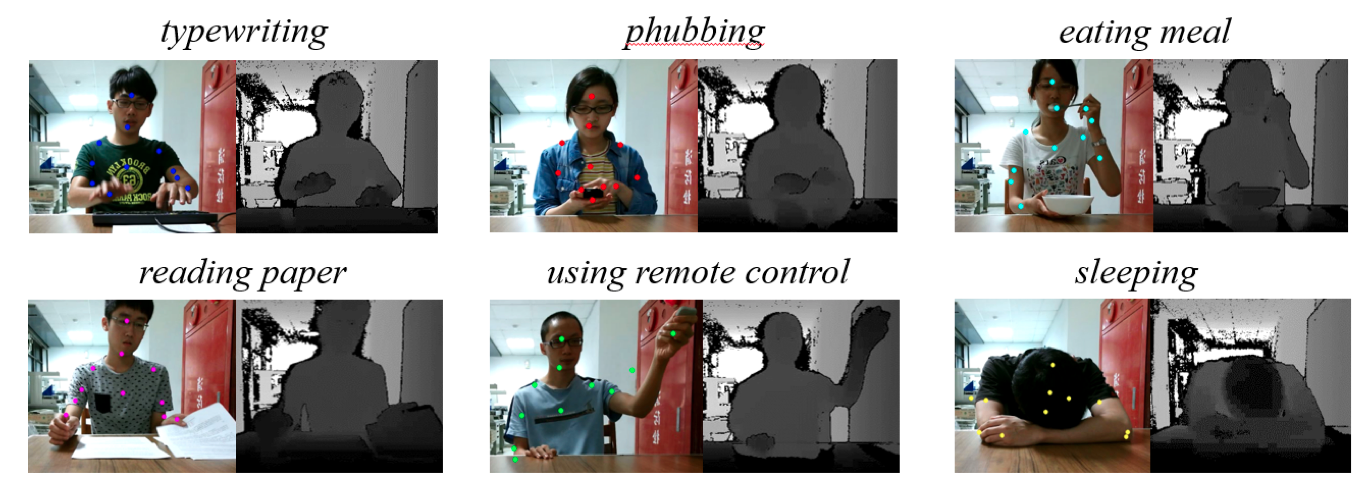

個人化動作辨識研究之目的在於想要建立一個可靠的通用型動作辨識系統,其中之困難處在於適應不同使用者有不同的動作習慣與外觀,針對這些難題尋求解決之道,則將可以迅速有效地建立個人化的動作辨識系統,進而運用在即時的互動應用,能以動作為輸入訊號融合於仰賴固定型態動作辨識的系統,提升未來日常生活上的便利性以及在虛擬實境的延伸技術上有多方面的發展。

This research focuses on developing a reliable and general action-recognition (AR) system, which is difficult to adapt various appearance and action styles of different users. Many future applications rely on this technique such as the real-time Human-Machine Interaction (HCI) systems due to the significance of identifying the input signal of a certain motion pattern, and are potentially used in extension techniques of AR as well as enhance the convenience of life.

虛擬實境

Virtual Reality

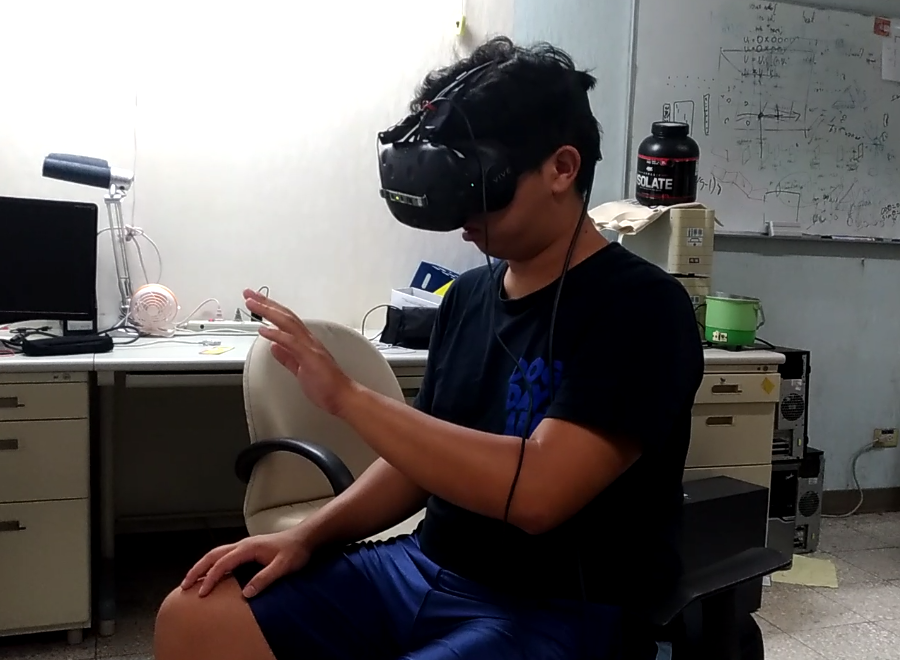

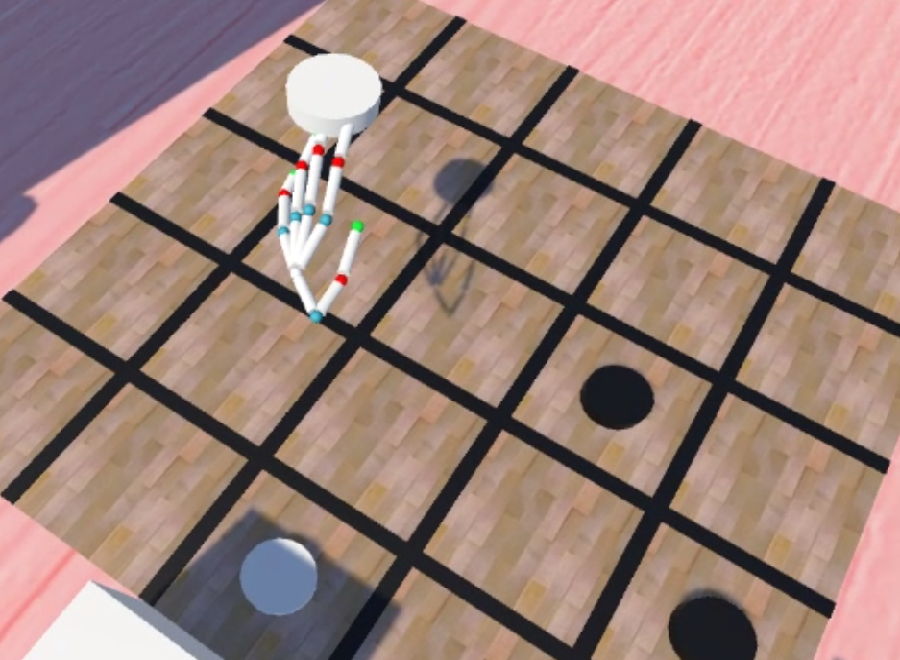

目前致力於手勢辨識與人機互動的應用,主要想要提供的是與電腦以及虛擬環境自然互動的方法。其中的困難之處在於手部多樣性的動作,手指與手指之間互相的遮蔽以及深度攝影機取得之影像中的缺失。在整個應用的流程中,首先以架設在頭盔上的深度攝影機取得影像,並以深度學習計算出手的關節在空間中的座標,並投影在虛擬環境中,並以此作為與虛擬物品互動的根據。

This research is now focusing on hand pose estimation and human-computer interaction, and we would like to propose a method to provide a natural way to interaction with the computer and the virtual environment. The difficulty of this includes hand pose variations, self-occlusion and depth image broken. The process of our application is listed below: we will first get the depth image from the depth camera which is set on the helmet, then estimation the 3D coordinates of the hand joint by deep learning. Finally, project the joint coordinates in to the virtual environment and interact with the object.

適合自動駕駛車輛之結合邊緣資訊即時影像語意分割系統

Real-Time Semantic Segmentation with Edge Information for Autonomous Vehicle

在先進駕駛輔助系統 (ADAS)中,其中一項基本功能需求是藉由影像切割功能找到車輛可以行駛的區域。有別於傳統影像切割方法,採用語意分割的深度學習網路架構,可以更正確辨識不規則的道路區域,指引自駕車行駛在更複雜的道路環境中。本研究中,首先分析最先進的即時影像語意分割系統的輸出。 由這些輸出結果顯示,大多數被錯誤分類的像素,都是位於兩個相鄰物件的邊界上。基於此觀察,本研究提出一種新穎的即時影像語意分割網路系統,它包含一個類感知邊緣損失函數模塊與一個通道關注機制,旨在提高系統準確性而不損害運行速度。

In Advanced Driver Assistance Systems (ADAS), image segmentation for recognizing drivable areas and guiding the vehicle forward is a basic function. For the latter, unlike those traditional image segmentation methods, image semantic segmentation based on deep learning architecture can handle the irregularly shaped road areas better, guiding a vehicle to drive in a more complex environment. In our research work, we first analyze the output of state-of-the-art real-time semantic segmentation networks. The result shows that most of the misclassified pixels are located on the edge between two classes. Based on this observation, we propose a novel semantic segmentation network which contains a class-aware edge loss module and a channel-wise attention mechanism, aiming to improve the accuracy with no harm to inference speed.