A Brain-Inspired Self-Organizing Episodic Memory Model for a Memory Assistance Robot

Chiao-Yu Yang, Edwinn Gamborino, Li-Chen Fu, Yu-Ling Chang

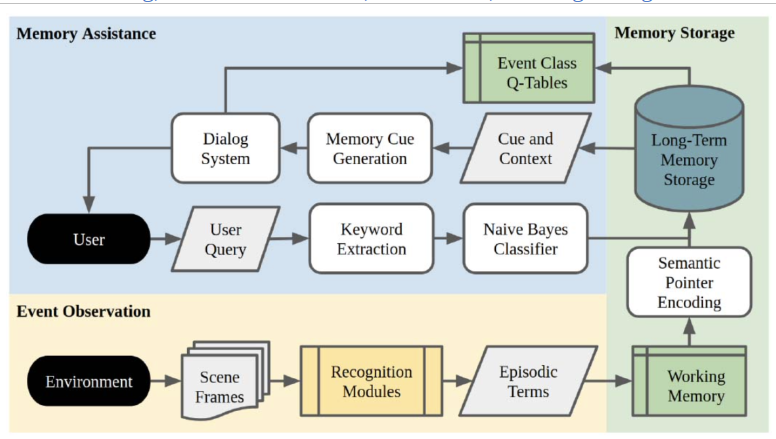

This paper presents a novel approach to memory assistance robots targeting the elderly. The aim is to develop a robot companion with an effective memory assistance system. To achieve this, an end-to-end memory assistance system is proposed and implemented on the Pepper robot, integrating event observation, memory storage, and personalized assistance. The essential component is a brain-inspired episodic memory model, which incorporates a hierarchical long-term memory storage model capable of memory clustering, merging, and summarization, mimicking human memory processes. Using reinforcement learning and social interaction, the robot learns effective cues for stimulating memory recall, offering tailored assistance. Positive user feedback validates the practical implications. The proposed model demonstrates promising results in experiments. The memory assistance robot significantly improves participants' recall accuracy for activities, providing valuable retrospection and strengthening memory. The self-organizing episodic memory model exhibits fast computing and adaptability on limited hardware. This research has potential implications for memory support and human-robot interaction. The developed brain-inspired memory model enhances the capabilities of memory assistance robots, benefiting elderly users and potentially extending to other applications requiring memory assistance.
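The clustering and merging behaviour described above can be illustrated with a small sketch. It is not the model from the paper: it assumes events are already encoded as feature vectors, and the class `EpisodicStore`, the cosine-similarity criterion, and the `merge_threshold` value are all hypothetical choices made for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


class EpisodicStore:
    """Hypothetical online store: similar events are merged into one cluster."""

    def __init__(self, merge_threshold=0.9):
        self.merge_threshold = merge_threshold  # assumed value, not from the paper
        self.clusters = []  # each cluster: {"centroid": [...], "count": int}

    def add(self, event):
        # Find the most similar existing cluster.
        best, best_sim = None, -1.0
        for cluster in self.clusters:
            sim = cosine(event, cluster["centroid"])
            if sim > best_sim:
                best, best_sim = cluster, sim
        if best is not None and best_sim >= self.merge_threshold:
            # Merge: update the centroid as a running mean (a crude "summary").
            n = best["count"]
            best["centroid"] = [(m * n + x) / (n + 1)
                                for m, x in zip(best["centroid"], event)]
            best["count"] = n + 1
        else:
            # No cluster is similar enough: start a new one.
            self.clusters.append({"centroid": list(event), "count": 1})
        return len(self.clusters)


store = EpisodicStore(merge_threshold=0.9)
for event in [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 1.0, 0.0]]:
    store.add(event)
print(len(store.clusters), [c["count"] for c in store.clusters])
```

The first two events are nearly parallel and are merged into one cluster, while the third is orthogonal to them and opens a new one. Because each event is handled in a single pass, a scheme of this kind stays cheap on limited hardware.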

Interactive Question-Posing System for Robot-Assisted Reminiscence from Personal Photos

Yi-Luen Wu, Edwinn Gamborino, Li-Chen Fu

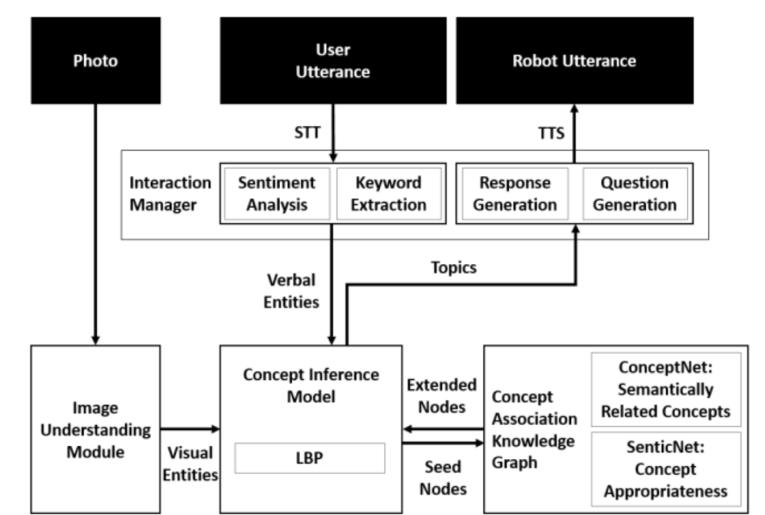

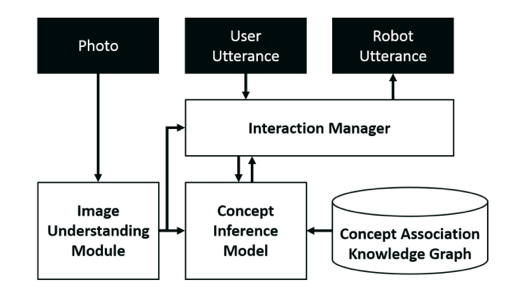

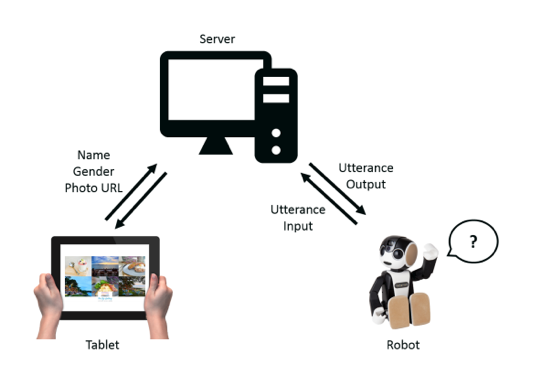

This paper presents the implementation of a robot companion that assists individuals in reminiscing about personal memories through the analysis of photos. The aim is to enable the robot to associate relevant concepts with the photo content and engage users through relatable questions. To achieve this, deep learning techniques are employed to recognize events, objects, and scenes in the photos, and a Markov Random Field-based algorithm incorporating common sense knowledge is utilized to infer associated concepts and autonomously generate semantically related and socially appropriate topics. The system poses appropriate questions based on these topics to guide users in reminiscing through conversation. An integrated, end-to-end robotic system capable of real-time user interactions is created. It can pose relevant questions and engage users effectively, providing the potential for organized reminiscing. Experimental validation demonstrates the high accuracy of the event recognition network on both a dataset and personal photos. The human-robot interaction experiment confirms the system's capability to ask coherent questions related to the image and previous user utterances, with the potential to guide memory recall. Although some results lack statistical significance, they exhibit a generally positive outcome, offering insights for improving question coherence in future studies.

Inferring Stressors from Conversation: Towards an Emotional Support Robot Companion

Yu-Cian Huang, Edwinn Gamborino, Yan-Jia Huang, Xiaobei Qian, Li-Chen Fu, Su-Ling Yeh

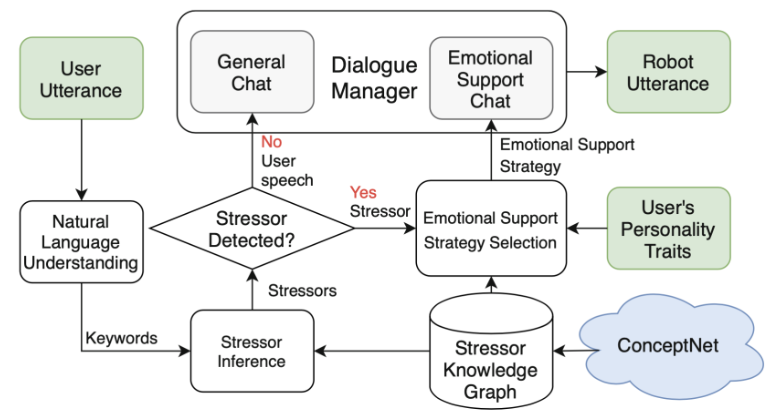

This paper presents a conversational robot system that provides emotional support to individuals facing stressful situations. The aim is to identify stressors from user speech and offer appropriate support strategies based on the detected stressors and the user's personality traits. A novel stressor-specific knowledge graph derived from ConceptNet is created to enable the robot to infer stressors from verbal utterances and exhibit artificial empathy. The system then employs a pre-trained neural network to determine the most suitable emotional support strategy for a user with a specific personality. The proposed system achieves real-time interaction through an embodied robotic agent capable of engaging in naturalistic conversation, making small talk, and providing emotional support when stressors are detected. Evaluation results demonstrate the system's ability to accurately infer stressor categories, with a high average Likert scale score, and to effectively predict suitable emotional support methods, achieving a high Dice coefficient. The system successfully identifies stressor categories from self-disclosure in human-robot interaction experiments and generates appropriate supportive responses. This paper addresses a research gap by developing an emotional support conversational agent in the context of social robotics, contributing to providing guidance and comfort in stressful situations.
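For readers unfamiliar with the Dice coefficient mentioned above, the sketch below shows how it scores the overlap between a predicted set of support strategies and a reference set. The strategy labels are hypothetical and the abstract does not report the actual label set or scores.

```python
def dice_coefficient(predicted, reference):
    """Dice = 2 * |A ∩ B| / (|A| + |B|); 1.0 means identical sets."""
    predicted, reference = set(predicted), set(reference)
    if not predicted and not reference:
        return 1.0  # convention: two empty sets agree perfectly
    return 2 * len(predicted & reference) / (len(predicted) + len(reference))


# Hypothetical strategy labels, for illustration only.
predicted = {"reassurance", "active_listening", "advice"}
reference = {"reassurance", "active_listening"}
score = dice_coefficient(predicted, reference)
print(score)  # 2 * 2 / (3 + 2) = 0.8
```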

Adaptive Recommendation Dialogue System through Pragmatic Argumentation

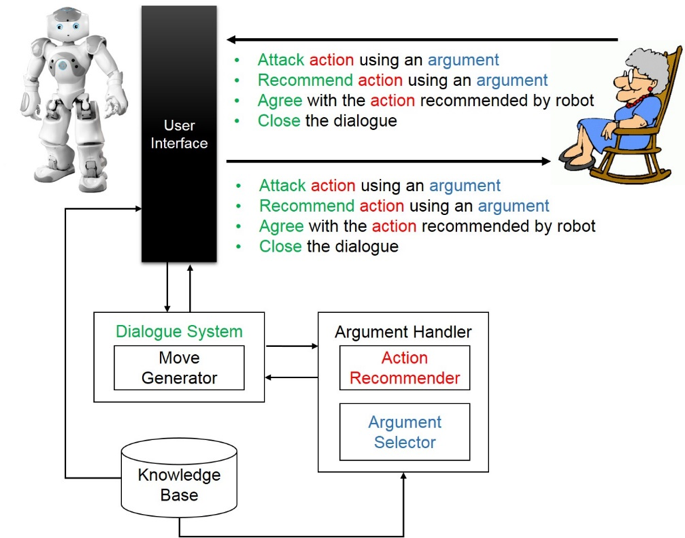

In an aging society, a robotic caregiver is expected to be able to persuade an elderly person to adopt healthier behavior. In this work, pragmatic argumentation is used to make a user realize that an action is worthwhile, and an adaptive recommendation dialogue system built on it is proposed. The work has three objectives. First, we build a knowledge base for constructing pragmatic arguments; it covers not only the effect of an action but also the reason for that effect. Second, the robot is endowed with the ability to recommend an action that adapts to different users: the recommended action is determined by integrating the robot’s and the user’s value systems, so the robot knows how to compromise with the user. Third, the robot persuades the user through his or her value system, selecting the perspective from which the user is most likely to be interested in the recommended action.

Interactive Question-Posing System for Reminiscing about Personal Photos

Reminiscence is an activity that continues throughout our lifespan. While memories can serve as topics in people’s chit-chat, recalling the past can also help people build self-esteem and increase their level of happiness. In our work, we apply deep learning techniques to recognize events, objects, and scenes in a photo in order to understand its content. Then, we use a Markov random field to combine the observations from the photo with the user’s utterances. Afterwards, we use loopy belief propagation to infer possible associated concepts and topics. Finally, we give appropriate questions about the selected topics to RoBoHoN. The results show that the proposed system can pose proper and relevant questions to interact with the user, and has the potential to help guide the user to recall the past in an organized way.
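The inference step can be illustrated with a minimal sum-product loopy belief propagation routine on a binary pairwise Markov random field. This is a generic textbook sketch, not the system's implementation: the node names, potentials, and iteration count are assumptions chosen only to show how evidence on one concept raises the belief in connected concepts.

```python
def loopy_bp(unary, edges, pairwise, iterations=20):
    """Sum-product loopy BP on a binary pairwise MRF.

    unary:    {node: [phi(0), phi(1)]}
    edges:    list of (u, v) pairs
    pairwise: {(u, v): [[psi(0,0), psi(0,1)], [psi(1,0), psi(1,1)]]}
    """
    neighbors = {n: [] for n in unary}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)

    def psi(u, v, xu, xv):
        # Look up the potential regardless of edge direction.
        if (u, v) in pairwise:
            return pairwise[(u, v)][xu][xv]
        return pairwise[(v, u)][xv][xu]

    # One message per directed edge, initialised to uniform.
    messages = {(u, v): [1.0, 1.0] for u in unary for v in neighbors[u]}
    for _ in range(iterations):
        new = {}
        for (u, v) in messages:
            out = []
            for xv in (0, 1):
                total = 0.0
                for xu in (0, 1):
                    incoming = 1.0
                    for w in neighbors[u]:
                        if w != v:  # exclude the message coming from the target
                            incoming *= messages[(w, u)][xu]
                    total += unary[u][xu] * psi(u, v, xu, xv) * incoming
                out.append(total)
            z = sum(out)
            new[(u, v)] = [m / z for m in out]  # normalise for stability
        messages = new

    beliefs = {}
    for n in unary:
        b = list(unary[n])
        for w in neighbors[n]:
            b = [b[x] * messages[(w, n)][x] for x in (0, 1)]
        z = sum(b)
        beliefs[n] = [x / z for x in b]
    return beliefs


# Hypothetical example: "cake" is observed in the photo; the other two are latent.
unary = {"cake": [0.1, 0.9], "birthday": [0.5, 0.5], "party": [0.5, 0.5]}
edges = [("cake", "birthday"), ("birthday", "party"), ("party", "cake")]
agree = [[2.0, 1.0], [1.0, 2.0]]  # assumed potential favouring co-occurrence
beliefs = loopy_bp(unary, edges, {e: agree for e in edges})
print({n: round(b[1], 3) for n, b in beliefs.items()})
```

Because the three nodes form a cycle, the beliefs are approximate marginals rather than exact ones, which is the usual trade-off when belief propagation is run on a loopy graph.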

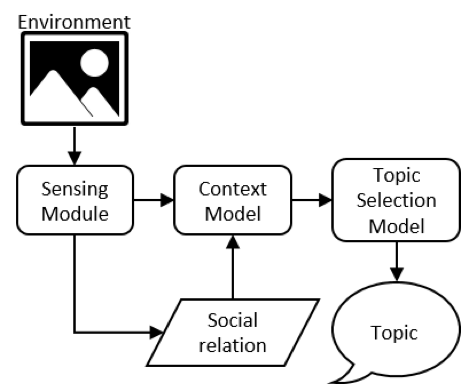

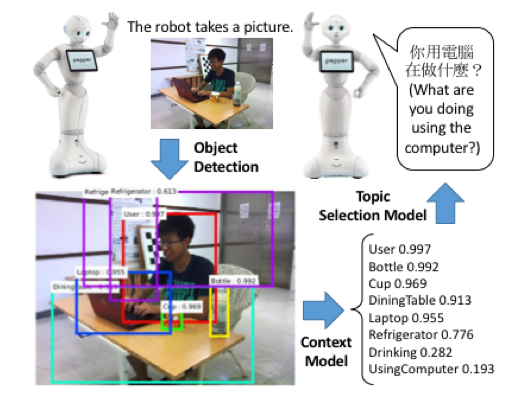

Context-aware Topic Proposal in Phatic Communion for Interaction Initiation of a Robot Companion

Robot companions have recently become increasingly popular; they are expected to provide not only physical assistance but also social support for humans. To provide social connection and improve user experience, the capability to actively initiate an interaction in a natural way is important. When people try to engage with others, they often start from topics related to the context. In this work, we aim to develop a context-aware robot that initiates non-task-oriented interactions with a similar strategy. We focus on how a robot can interpret its environment as semantic concepts and then generate a suitable topic proposal to start an interaction. Using object detection components from the computer vision field, the robot detects semantic entities in the environment and combines the detection results with commonsense knowledge to establish a context model based on a hinge-loss Markov random field. Then, according to the score of each concept in the context model and the social relation with the user, the robot generates a sentence to initiate the interaction. Finally, an experiment was conducted to examine the effectiveness of the context-aware topics.
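To make the hinge-loss Markov random field concrete, the sketch below scores one continuous concept variable against two weighted rules. In this family of models, a rule of the form body → head contributes a potential equal to its distance to satisfaction under Łukasiewicz logic, max(0, sum(body) - (n - 1) - head), where n is the number of atoms in the body. The concept names, detection scores, and weights are hypothetical, and the grid search merely stands in for the convex optimisation that practical solvers use.

```python
def rule_distance(body_values, head_value):
    """Distance to satisfaction of (b1 AND ... AND bn) -> head, Lukasiewicz relaxation."""
    return max(0.0, sum(body_values) - (len(body_values) - 1) - head_value)


def energy(topic, detections, rule_weight=2.0, prior_weight=0.5):
    """Weighted sum of hinge-loss potentials (linear form, exponent 1)."""
    # Rule (hypothetical): detected(cup) AND detected(table) -> topic(coffee_break)
    support = rule_weight * rule_distance(
        [detections["cup"], detections["table"]], topic)
    # Prior (hypothetical): topics are off unless supported, i.e. NOT topic.
    prior = prior_weight * topic
    return support + prior


# Assumed soft truth values from an object detector.
detections = {"cup": 0.9, "table": 0.8}

# MAP inference over a single variable via grid search on [0, 1].
candidates = [i / 100 for i in range(101)]
best_topic = min(candidates, key=lambda t: energy(t, detections))
print(best_topic)
```

With these numbers the rule pushes the topic score up to about 0.7 (the Łukasiewicz truth value of the body) and the prior keeps it from going any higher, so the minimiser sits at the hinge point.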

Multi-hop Fact-Checking via Graph Inference

Ti Chang [1], Tung-Yi Lin [1], Li-Chen Fu [1], Su-Ling Yeh [2]

[1] Department of Computer Science and Information Engineering, National Taiwan University.

[2] Department of Psychology, National Taiwan University.

Large language models (LLMs) have become central to chatbot and knowledge-based QA applications but remain vulnerable to hallucinations, that is, factually incorrect or contextually misaligned responses. Fact-checking systems provide a practical remedy, yet existing pipelines often face three major challenges: contextual loss during claim extraction, the need for multi-hop reasoning across documents, and limited precision when feeding full documents into LLMs. We propose a graph-based fact-checking framework that addresses these challenges. By integrating graph attention mechanisms over sentence-level evidence, our method captures fine-grained relationships across documents and enables interpretable multi-hop inference. Unlike traditional approaches that rely solely on ranked text spans, our framework leverages structural reasoning to better identify and integrate supporting facts. Experiments on multi-hop fact-checking benchmarks show that our method significantly improves support prediction and multi-hop reasoning performance. The results highlight the value of graph-based inference in improving both accuracy and interpretability in fact verification.
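The idea of attention over a sentence-level evidence graph can be sketched as follows. This is a deliberately simplified stand-in, not the proposed architecture: it uses unparameterised dot-product attention instead of a learned graph attention layer, and the node names, features, and edges are hypothetical. It shows how stacking two hops lets information from a sentence that is not directly linked to the claim reach the claim node, and how the attention weights can be inspected.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_hop(features, adjacency):
    """One hop: each node attends over itself and its neighbours."""
    updated, weights = {}, {}
    for node, vec in features.items():
        nbrs = [node] + adjacency.get(node, [])  # include a self-loop
        scores = [sum(a * b for a, b in zip(vec, features[n])) for n in nbrs]
        alphas = softmax(scores)
        weights[node] = dict(zip(nbrs, alphas))  # inspectable attention weights
        updated[node] = [
            sum(alpha * features[n][i] for alpha, n in zip(alphas, nbrs))
            for i in range(len(vec))
        ]
    return updated, weights


# Hypothetical graph: the claim links to s1 and s3; s2 is reachable only via s1.
features = {
    "claim": [1.0, 0.0, 0.0],
    "s1": [1.0, 1.0, 0.0],
    "s2": [0.0, 1.0, 1.0],  # the third dimension is unique to s2
    "s3": [0.0, 0.0, 0.0],
}
adjacency = {
    "claim": ["s1", "s3"],
    "s1": ["claim", "s2"],
    "s2": ["s1"],
    "s3": ["claim"],
}

hop1, w1 = attention_hop(features, adjacency)
hop2, w2 = attention_hop(hop1, adjacency)
print(hop1["claim"][2], hop2["claim"][2] > 0)
```

After one hop the claim representation carries nothing from `s2`; after two hops it does, because `s1` has absorbed part of `s2` in the meantime. The per-edge weights in `w1` and `w2` are what makes this style of inference comparatively easy to inspect.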

Keywords: Fact-Checking, Information Retrieval, Multi-Hop Question Answering, Multi-Hop Fact-Checking, Retrieval-Augmented Generation, Large Language Model

Multimodal Depression Detection through Conversational Interactions with an Emotion-Aware Social Robot: Pilot Study

Pu-Yu Liao, Yu-Quan Su, Xiaobei Qian, Yu-Ling Chang, Yun-Hsiang Lee, Li-Chen Fu

Depression affects more than 300 million people worldwide, yet existing diagnostic methods remain difficult to apply in frequent or large-scale screening. To address this gap, this study proposes DEPRESAR-Fusion, a lightweight multimodal depression detection framework for natural interactions with emotion-aware social assistant robots (SARs). The system integrates acoustic, linguistic, and visual features with an LLM-based response module that dynamically adapts conversational strategies. To enhance emotional expression during interaction, participants viewed emotionally evocative videos before engaging with the SAR, and to address data scarcity, training data were augmented using public depression-related social media corpora and LLM-generated synthetic samples. Evaluations on benchmark datasets for binary depression classification and PHQ-8 regression showed that emotional stimulation improved participants’ expressiveness and model performance, while DEPRESAR-Fusion outperformed prior multimodal baselines across tasks. These findings suggest that combining emotion induction, data augmentation, and lightweight multimodal fusion can support accurate and scalable depression detection in real-world SAR settings.

KG-Guided Proactive Questioning for LLMs in Multi-turn Interactive Medical Reasoning

Yu-Chen Li, Tzu-Ni Yang, Li-Chen Fu, Yung-Jen Hsu

While Large Language Models (LLMs) have demonstrated significant potential in medical reasoning, existing research is often conducted under the idealized assumption of complete information, which contrasts with the reality of incomplete information in clinical practice. To address this gap, this paper proposes a novel interactive reasoning framework designed to empower LLMs with the ability to proactively ask questions to gather critical information. Our core methodology involves a Knowledge Graph (KG) Reasoner that explores a professional medical KG to identify the most crucial information gaps, thereby providing strategic guidance for the LLM’s questioning. Furthermore, we introduce a confidence estimation mechanism inspired by the clinical process of differential diagnosis, enabling the system to accurately assess its own uncertainty and trigger questions when necessary, rather than making premature decisions. To validate our approach, we conducted experiments on an interactive medical question-answering benchmark. The results demonstrate that, compared to existing baselines like MedIQ, our framework can effectively gather key patient information through more strategic questioning and avoid errors caused by premature diagnostic closure. Our model achieves a significant improvement in final question-answering accuracy, proving that the proposed KG-guided questioning strategy is a viable path toward making AI models more aligned with real-world clinical workflows, thereby enhancing their reliability and safety.
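The decision between answering and asking a follow-up question can be sketched as a simple confidence gate. The abstract does not specify how confidence is estimated, so the sketch assumes one plausible choice: confidence is one minus the normalised entropy of the candidate distribution. The candidate labels, probabilities, and threshold are hypothetical.

```python
import math


def normalized_entropy(probs):
    """Shannon entropy divided by its maximum, giving a value in [0, 1]."""
    if len(probs) < 2:
        return 0.0
    h = -sum(p * math.log(p) for p in probs if p > 0)
    return h / math.log(len(probs))


def next_action(candidate_probs, confidence_threshold=0.6):
    """Answer when the distribution is peaked enough, otherwise ask a question."""
    confidence = 1.0 - normalized_entropy(list(candidate_probs.values()))
    if confidence >= confidence_threshold:
        best = max(candidate_probs, key=candidate_probs.get)
        return ("answer", best, confidence)
    return ("ask", None, confidence)


# Hypothetical candidate distributions before and after gathering more information.
early = {"option_a": 0.40, "option_b": 0.35, "option_c": 0.25}
late = {"option_a": 0.95, "option_b": 0.03, "option_c": 0.02}

early_action = next_action(early)
late_action = next_action(late)
print(early_action[0], late_action[0], late_action[1])
```

With the nearly flat distribution the gate chooses to ask, which is the behaviour intended to avoid premature closure; once the distribution is sharply peaked, it commits to the top candidate. In a full system, the question to ask would come from the knowledge graph reasoner rather than from this gate.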